From long implementation project to rapid release

In recent years, projects have become increasingly agile. This means that longer implementation phases of six to 18 months are broken down into smaller releases that last no longer than two months. Often, planning is also done on a weekly basis. For example, in the development of our myleo / dsc logistics platform, releases are scheduled every two weeks.

In project business, the scope keeps evolving even in a meticulously planned waterfall project. We can speculate about the reasons (lack of buy-in from the business departments at the start of the project, too little time for requirements analysis, changing availability of key experts), but the symptom is evident and very well known.

The advantages of fast iterations

Faster release cycles bring many advantages. There is no need to argue with vague statements such as increased customer satisfaction, because precisely formulated arguments can be found in abundance:

- The project plan can be revisited and adjusted in short iterations, which reduces the risk of scope misalignment.

- In addition, features can be provided individually and users do not have to wait long for a major release. This pays off especially when the application is already running in production.

- Another aspect concerns the development organization: the speed of the developers and the certainty that features will still work properly after a release. If a release is rolled out often (at least every four weeks, in our view), bugs that occur in production are fixed more quickly. This is because real or perceived bugs can be attributed more quickly to a recent change. Once the faulty feature has been identified, the faulty location in the source code can also be found more quickly. It is like real life: if you are looking for your key and know where and when you last held it, you can quickly narrow down where it might be now.

Brave new, fast world?

So, quick releases bring a number of advantages, but they also have their downsides. After all, they mean one thing above all: many small changes, shipped frequently. This means that testing must be carried out after each release at the latest. From a commercial point of view, the question of who has to test what, and how much, is not insignificant.

With a SaaS solution (Software as a Service), the matter is clear – the manufacturer must take on the lion’s share, since they specify the release cycle. Tests also play an important role in product development and project business – after all, they are the only way to verify that the software does exactly what is expected of it.

In the following, however, I will refer primarily to SaaS solutions, although the aspects definitely also have their validity when an implementation project is running.

The test pyramid from a leogistics perspective

First of all, we need to put aside the terms “developer test” and “user test” because they are too vague. Product development instead distinguishes the test types of the test pyramid, which is an industry standard and should be familiar. I have grouped some test types for the sake of clarity.

The term “pyramid” probably refers to the number of tests (left) rather than test coverage (right). Purists may object that test coverage should also be a pyramid, but all too often disregard the budget situation in this consideration. In reality, test coverage (measured by both code and branch coverage) is likely to be more like a pole, with a tapered foundation and tip. But even that can get you pretty far.

However, the thrust of pure doctrine and practice is the same: automate as much as possible, because automated tests are cheaper in the long run than manual tests.

- Unit tests test individual methods, and nothing else. “Foreign” methods must be isolated and deliver reproducible outputs (stub/fake) or validate inputs reproducibly (mock, spy). Common tools from our practice are ABAP unit tests or Jest in the Node.js environment. The effort often correlates with the number of test doubles to be created. Experience shows that tests with more than three test doubles are more difficult to read, which means that the added value can quickly decrease due to increased maintenance effort. In this case, it is better to move up one level.

- Component / service tests exercise a vertical slice of an API from the top down to the database, but no foreign modules or services. Test doubles are therefore necessary here as well. While unit tests work like a scalpel, these tests work like scissors: coverage increases, but errors can be narrowed down less precisely. The initial state of each test is not established via test doubles but via a defined setup in the database, either in the physical database, delimited in a separate client (in SAP systems), or in an in-memory database started specifically for the test (e.g. www.npmjs.com/package/mongodb-memory-server). A local in-memory database can usually be parallelized and can therefore run in a pipeline. A separate test client in SAP cannot be parallelized without major effort, because a test run has neither its own master data setup nor its own client. In that case, a nightly, serial test run is preferable to a parallel run with race conditions. Common tools from our practice are ABAP unit tests plus leogistics’ own framework on top, as well as Supertest / Jest in the Node.js environment.

- GUI / integration / API tests test the software solution as a whole, but not necessarily the other systems connected to it. The tests are automated and run directly against the UI or API. The number of test doubles is manageable: they are limited to simulating incoming messages or verifying outgoing actions (e.g. www.inbucket.org/). If these tests can be based on an isolated master data setup, they can be parallelized; otherwise, they are candidates for a serial, periodic test run. Common tools from our practice are eCATT or Cypress. Here we are no longer working with a scalpel or a pair of scissors but with a lawnmower: coverage is high, but troubleshooting tends to be more tedious when a bug is actually detected.

- Manual tests – the human factor wins out here. Manual tests are essential, but in any case expensive and should therefore be used sparingly and thoughtfully. If repetitive test cases have been identified, one should look further down the pyramid.
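To make the unit test level more concrete, here is a minimal sketch of a stub and a spy in TypeScript. It is written without a test framework so that it stays self-contained; in Jest, `jest.fn()` would typically take over the role of the hand-written spy. The freight-quote function, the interfaces and all names are hypothetical and only serve as illustration, they are not taken from myleo / dsc.

```typescript
// Hypothetical collaborators of the unit under test ("foreign" methods).
interface RateProvider {
  ratePerKm(carrier: string): number;
}

interface Notifier {
  notify(message: string): void;
}

// Unit under test: computes a freight cost and reports quotes above a limit.
function quoteFreight(
  carrier: string,
  distanceKm: number,
  rates: RateProvider,
  notifier: Notifier,
  limit: number = 1000,
): number {
  const cost = rates.ratePerKm(carrier) * distanceKm;
  if (cost > limit) {
    notifier.notify(`Quote for ${carrier} exceeds limit: ${cost}`);
  }
  return cost;
}

// Stub: delivers a fixed, reproducible output instead of a real rate lookup.
const stubRates: RateProvider = { ratePerKm: () => 2 };

// Spy: records the inputs it receives so that the test can verify them later.
function createNotifierSpy(): Notifier & { calls: string[] } {
  const calls: string[] = [];
  return {
    calls,
    notify: (message: string) => {
      calls.push(message);
    },
  };
}

// Case 1: cost stays below the limit, so no notification is expected.
const quietSpy = createNotifierSpy();
const cheap = quoteFreight("ACME", 100, stubRates, quietSpy);

// Case 2: cost exceeds the limit, so exactly one notification is expected.
const loudSpy = createNotifierSpy();
const expensive = quoteFreight("ACME", 600, stubRates, loudSpy);
```

With only two test doubles the test remains easy to read. Every additional collaborator would need its own stub or spy, which is where the maintenance effort mentioned above starts to grow.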

Practical example

In myleo / dsc development, unit and service tests are tightly integrated into the GitLab pipelines: whenever a commit is pushed to a remote branch, the tests are run. They can be run in parallel and do not interfere with each other. Only when the pipeline is green may the branch be merged into the develop branch, on which the tests are then run again.

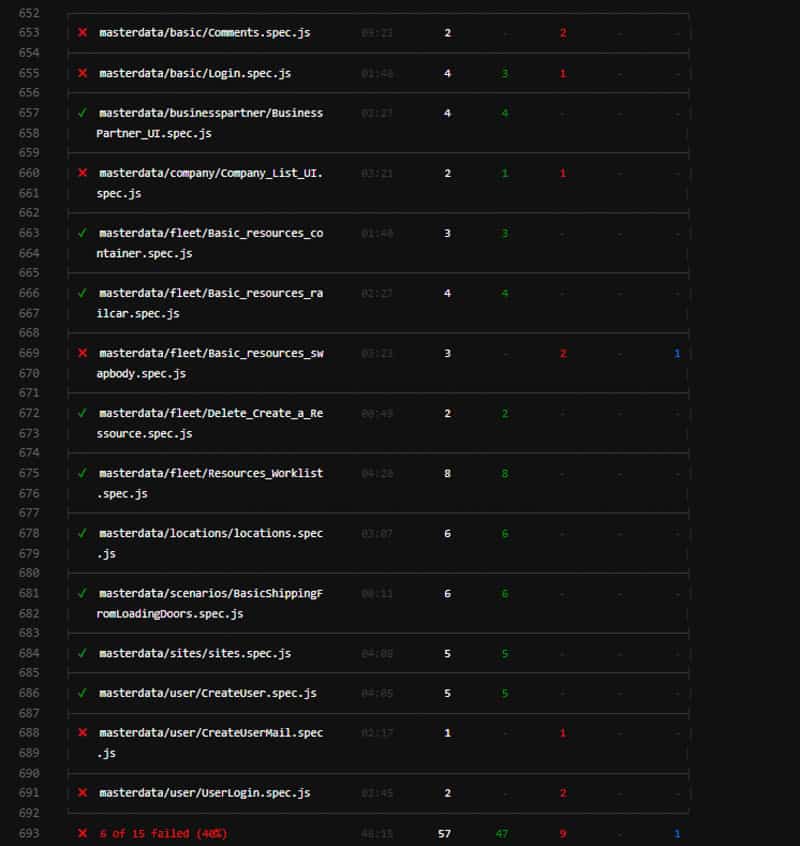
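Why service tests can run in parallel without interfering with each other can be sketched as follows: each test creates its own in-memory store and therefore its own defined initial state. This is a simplified illustration under assumptions. The store below is a plain `Map` standing in for an in-memory database, and `ShipmentService`, `InMemoryShipmentStore` and the test functions are hypothetical names, not the actual myleo / dsc setup.

```typescript
// Hypothetical record type and store; the Map stands in for an in-memory database.
type Shipment = { id: string; status: "planned" | "delivered" };

class InMemoryShipmentStore {
  private rows = new Map<string, Shipment>();

  async save(shipment: Shipment): Promise<void> {
    this.rows.set(shipment.id, { ...shipment });
  }

  async find(id: string): Promise<Shipment | undefined> {
    const row = this.rows.get(id);
    return row ? { ...row } : undefined;
  }

  async count(): Promise<number> {
    return this.rows.size;
  }
}

// Hypothetical service under test: the slice from the API layer to the store.
class ShipmentService {
  private store: InMemoryShipmentStore;

  constructor(store: InMemoryShipmentStore) {
    this.store = store;
  }

  async markDelivered(id: string): Promise<boolean> {
    const shipment = await this.store.find(id);
    if (!shipment) return false;
    await this.store.save({ ...shipment, status: "delivered" });
    return true;
  }
}

// Test A sets up its own store with one planned shipment.
async function testMarksDelivered(): Promise<boolean> {
  const store = new InMemoryShipmentStore();
  await store.save({ id: "S1", status: "planned" });
  const ok = await new ShipmentService(store).markDelivered("S1");
  const stored = await store.find("S1");
  return ok && stored?.status === "delivered" && (await store.count()) === 1;
}

// Test B uses the same shipment id but starts from an empty store of its own.
async function testUnknownShipment(): Promise<boolean> {
  const store = new InMemoryShipmentStore();
  const ok = await new ShipmentService(store).markDelivered("S1");
  return !ok && (await store.count()) === 0;
}

// Both tests run concurrently; neither can see the other's data.
async function runInParallel(): Promise<boolean[]> {
  return Promise.all([testMarksDelivered(), testUnknownShipment()]);
}
```

If both tests shared one store, test B could find the shipment written by test A, and the result would depend on timing. That is the race condition that makes a shared test client a candidate for serial execution.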

The GUI / integration and API tests run nightly on the first integration environment. Although they can be run in parallel in individual cases, they usually take too long for the pipelines (often one to two hours per module), since the UI is tested as well. If errors occur during the night, they are visible in GitLab’s schedule report in the morning.

A deployment to the preproduction environment is performed every two weeks. The tests are run again here. Only when all errors have been corrected may the release be brought to the production environment.

Manual tests play an important role in this process, but more from a UX and technical perspective than as a safeguard against regressions.

Conclusion

When the railroad was invented in the 19th century and the automobile a little later, the increase in speed was flanked by technical safety measures. The human factor was given less attention compared to the technical development of car bodies, seat belts, airbags, ABS and ESP.

This is also comparable with software development: Higher development speed must not shift the quality aspect exclusively onto the developer or software tester. Here, too, reproducible, technical assurance measures are necessary that are systematically integrated into quality assurance.

Uwe Kunath

Development Architect